RISE: Hardware Usability Testing for Dishwasher Features

Testing Dishwasher Features

CLIENT

RISE client project

ROLE

UX Researcher

DELIVERABLES

Test scenarios, documentation, summary report

TOOLS

Dovetail, RISE UX Lab

Note: The work is under NDA so I'm keeping details and images vague.

Brief

The client, a household appliance manufacturer, hired RISE to conduct usability studies of two new dishwasher features and to identify problems with their affordance and usability.

Core Problem

Writing test scenarios that isolate specific machine functions and grade them into distinct pass/fail states is tricky. So is using unambiguous language that all stakeholders interpret the same way, especially in an international team.

Methodology & My Contribution

Five test participants were invited to RISE UX Lab in Stockholm to perform scenarios under remote observation by UX researchers and client stakeholders. The subsequent tagging and thematic analysis were compiled into insights for the client to incorporate into their design work.

Why observation over surveys? Users cannot reliably articulate what confuses them about physical interfaces — they can tell you they're frustrated, but not why. We needed to watch actual behavior: where users looked, what they tried first, how long they searched, and what assumptions they made. This observational approach revealed not just that features were hard to find, but why: color blending, unexpected placement, lack of visual hierarchy.

I assisted the senior researchers with writing test scenarios and defining the levels of failure and success. I also took notes during the user tests, joined the post-test analysis with the client, did the tagging and thematic analysis in Dovetail, and proofread the final client report.

Results

Our findings identified critical affordance issues before the expensive manufacturing phase. The design team used these insights to inform iterative improvements within their strict budget constraints.

Business impact: For hardware manufacturers, design iterations post-manufacturing can cost millions when scaled across production runs. Finding these issues at the prototype stage, before committing to molds and tooling, enabled the client to make informed trade-off decisions between design clarity, material costs, and instructional mitigation.

While specific design changes remain confidential, the research prevented launching features users couldn't discover, which would have resulted in customer dissatisfaction and increased support costs.

Key Findings

Wine Glass Holder Affordance

None of the 5 participants used the wine glass holder as intended. Only 2 out of 5 found the function without prompting.

Research approach: We used a three-level prompting strategy to distinguish affordance ("do users discover it?") from usability ("can they use it if shown?"):

- Level 1: "Place wine glasses where you think they fit" (open-ended)

- Level 2: "Is there anywhere else they might fit?" (gentle nudge)

- Level 3: "What do you think that function in the back is for?" (direct guidance)

This progressive disclosure method allowed us to measure both discoverability and comprehension separately.
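As a rough illustration of how the three prompt levels can be turned into separate discoverability and usability counts, here is a minimal Python sketch. The class names, field names, and the `summarize` helper are hypothetical and were not part of the study tooling. The sample rows are placeholders chosen only to match the headline numbers above (2 of 5 unprompted, 0 of 5 used as intended); the split between Level 2 and Level 3 is invented for the example.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class PromptLevel(IntEnum):
    """The three prompt levels described above."""
    OPEN_ENDED = 1       # "Place wine glasses where you think they fit"
    GENTLE_NUDGE = 2     # "Is there anywhere else they might fit?"
    DIRECT_GUIDANCE = 3  # "What do you think that function in the back is for?"


@dataclass
class TaskObservation:
    participant: str
    discovered_at: Optional[PromptLevel]  # None means never discovered
    used_as_intended: bool                # usability, judged once the feature was found


def summarize(observations):
    """Count discoverability and usability outcomes separately."""
    unprompted = sum(o.discovered_at == PromptLevel.OPEN_ENDED for o in observations)
    prompted = sum(
        o.discovered_at is not None and o.discovered_at > PromptLevel.OPEN_ENDED
        for o in observations
    )
    never = sum(o.discovered_at is None for o in observations)
    return {
        "participants": len(observations),
        "discovered_unprompted": unprompted,
        "discovered_with_prompting": prompted,
        "never_discovered": never,
        "used_as_intended": sum(o.used_as_intended for o in observations),
    }


# Placeholder rows, NOT the study's per-participant data.
SAMPLE = [
    TaskObservation("P1", PromptLevel.OPEN_ENDED, False),
    TaskObservation("P2", PromptLevel.OPEN_ENDED, False),
    TaskObservation("P3", PromptLevel.GENTLE_NUDGE, False),
    TaskObservation("P4", PromptLevel.DIRECT_GUIDANCE, False),
    TaskObservation("P5", PromptLevel.DIRECT_GUIDANCE, False),
]

summary = summarize(SAMPLE)
```

Keeping the discovery level and the "used as intended" flag as separate fields is what lets a single session answer both questions without one result masking the other.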

Modular Tray Discovery

The modular tray proved similarly challenging, with 3 out of 5 participants finding it independently: one because they had a similar feature on their current machine, the others after more intense prompting.

Critical insight: Multiple users thought they had broken the mechanism and couldn't reset it. User comments revealed that control mechanisms blended into the machine's color scheme, making it unclear "what to pull, what to push."

This finding highlighted not just a discovery problem, but a feedback and reversibility issue — users feared breaking expensive equipment.

Preparation

The client provided a brief with a description of the two features they wanted to test for usability. It was our job to translate the somewhat ambiguous wording into a test protocol that would go through both features in approximately 40 minutes, with multiple pass/fail states for each test.

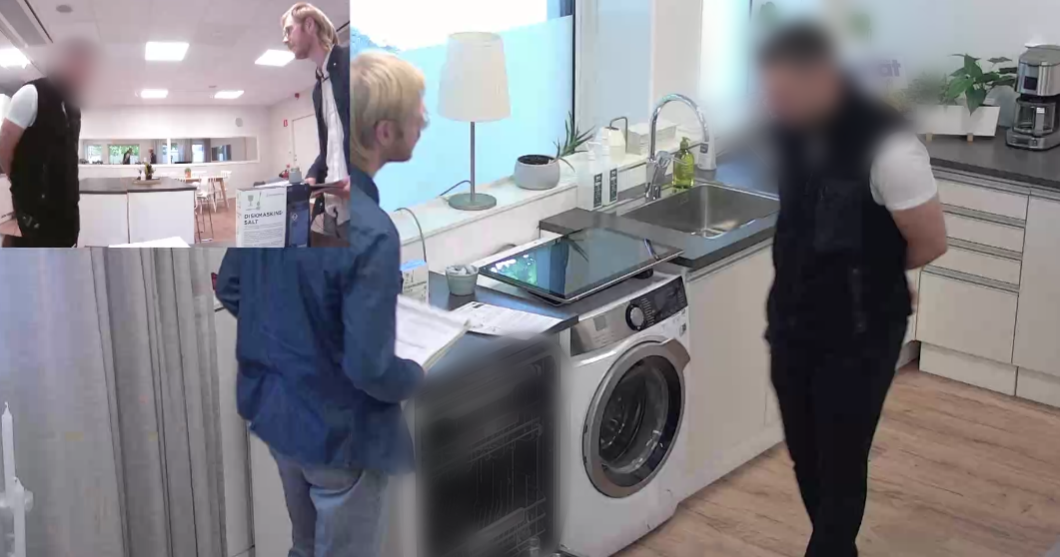

The client delivered the dishwasher to the UX Lab in Stockholm and the local team set it up along with all the utensils and dishes to be used.

Once the test protocol was written, we ran a pre-test with one participant. Based on the results and feedback from the client, we revised the protocol with instructions such as "leave tray A in upright position at start as a clue" and "before you fail the user completely, add a second demonstration trigger".

Test Day

On the day, five test participants went through the test scenario one at a time, guided by a senior researcher on site. Another researcher and I took notes remotely, and a couple of client stakeholders also observed. Each test took approximately 40 minutes and was followed by a half-hour debriefing with the client where we compared notes.

The client has been conducting these tests for many years and knows that the most useful feedback comes from seeing people get frustrated and fail. A neutral test protocol and a detached test administrator are an excellent way to surface those moments.

Even when test participants completely failed a task, they often gave the features full marks in the exit survey - "yes, now that I know the point of it I really like the idea" - so if you want to improve a product, it's better to look at what people do, rather than what they say.

People don't want to be perceived as either rude or stupid, so their tendency is to see their failures to understand something as a personal oversight, not the designer's fault. Hence the term "user error" that comes up when we try to explain mishaps and accidents, instead of placing the blame squarely on those who didn't test their products well enough.

Summary & Report

When conducting observational studies, it's important to be aware of one's biases and tendency to derive intent instead of just going by observed facts - after all, we want to know what happened, not guess what users were thinking - so rigorous coding and compilation of results is important.

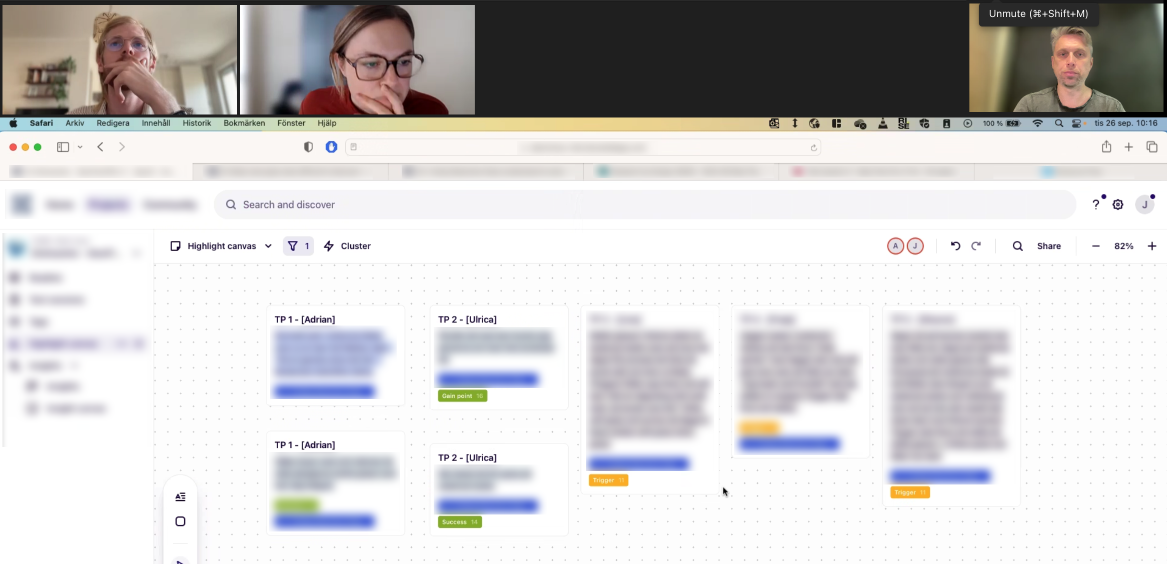

Analysis methodology: Following each test session, the RISE team and client stakeholders conducted immediate debriefing sessions to align on observations. We then coded video recordings and notes in Dovetail, using thematic analysis to identify recurring patterns across all 5 participants. Three primary usability clusters emerged, each documented with specific user quotes and behavioral evidence.

When the tests were complete, we had observation notes from three researchers as well as comments from client stakeholders, and it was time to group, tag and summarize the material. Using Dovetail for clustering and thematic analysis, we summarized the results in a report with actionable insights and delivered them to the client.

Testing takes time and costs money, but the cost of implementing even seemingly simple features that turn out to be flawed or unusable far outweighs the cost of testing beforehand. It was fascinating to be part of the process with such an experienced team, both at RISE and at the client.

I received a nice evaluation for my work!

I prepared a usability test together with Mateusz during his time at RISE. The idea was to show him how I conduct usability tests in the UX lab, but it ended up with Mateusz being able to assist me and help me with all tasks excellently. Mateusz developed appropriate tasks for the product test, wrote scripts and triggers, observed and took notes during test sessions. Then he actively participated with me and the design team in the analysis work and wrote a final report. Mateusz performed all this in an exemplary manner, showed his knowledge of theory and asked the right questions to deliver in a high-pressure situation for a major client. I warmly recommend Mateusz to anyone considering him for their future team!

Johan Rindborg, Senior Usability Tester at RISE – on my LinkedIn profile